All digital oscilloscopes measure by sampling the analog input signals and digitizing the values.

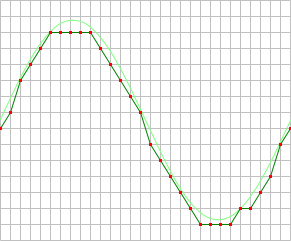

Sampling

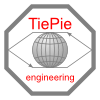

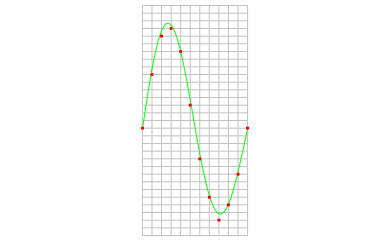

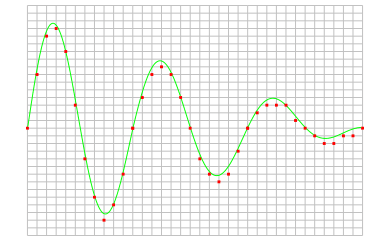

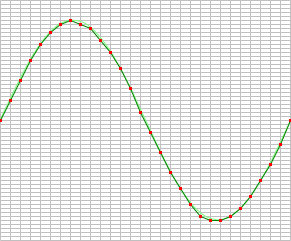

When an oscilloscope samples an input signal, samples are taken at fixed intervals. At these intervals, the magnitude of the input signal is converted to a number. The accuracy of this number depends on the resolution of the oscilloscope. The higher the resolution, the smaller the voltage steps into which the input range of the instrument is divided. The acquired numbers can be used for various purposes, e.g. to create a graph.

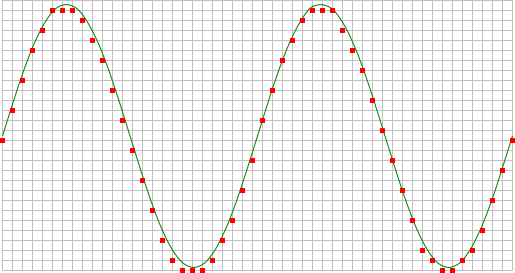

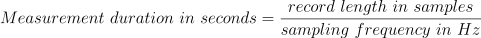

The sine wave in the above picture is sampled at the dot positions. By connecting the adjacent samples, the original signal can be reconstructed from the samples. You can see the result in the next illustration.

Sample frequency

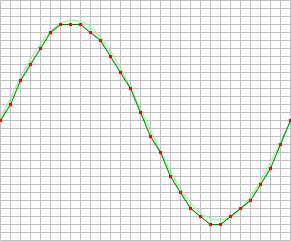

The rate at which samples are taken by the oscilloscope is called the sample frequency, the number of samples per second. A higher sample frequency corresponds to a shorter interval between the samples. As is visible in the picture below, with a higher sample frequency, the original signal can be reconstructed much better from the measured samples.

The sample frequency must be higher than 2 times the highest frequency in the input signal. This lower bound is called the Nyquist rate; correspondingly, half the sample frequency is called the Nyquist frequency. In theory, the input signal can be reconstructed when more than 2 samples per period are taken. In practice, at least 10 to 20 samples per period are recommended to be able to examine the signal thoroughly in an oscilloscope. When the sample frequency is not high enough, aliasing will occur.
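The rule of thumb above can be turned into a small calculation. The following is a minimal sketch, not part of the Multi Channel oscilloscope software; the function name `sample_frequency_guidelines` and its parameters are chosen here for illustration only.

```python
def sample_frequency_guidelines(signal_frequency_hz, samples_per_period=(10, 20)):
    """Return (theoretical lower bound, practical minimum, practical maximum) in Hz.

    The sample frequency must be HIGHER than the first value; the other two
    follow the 10 to 20 samples per period recommendation.
    """
    lower_bound = 2 * signal_frequency_hz
    practical_low, practical_high = (n * signal_frequency_hz for n in samples_per_period)
    return lower_bound, practical_low, practical_high


# Example: the highest frequency of interest in the input signal is 1 kHz.
bound, low, high = sample_frequency_guidelines(1_000)
print(f"Sample faster than {bound} Hz; for inspection use {low} to {high} Hz")
```

For a 1 kHz signal this gives a lower bound of 2 kHz and a practical range of 10 kHz to 20 kHz.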

Changing the sample frequency of an instrument in the Multi Channel oscilloscope software can be done in various different ways:

- Clicking the Sample frequency indicator on the channel toolbar and selecting the required sample frequency from the popup menu.

- Opening the instrument settings dialog using the Instrument settings dialog button and selecting the required sample frequency in the dialog.

- Clicking the decrease/increase sample frequency buttons on the instrument toolbar.

- Using hotkeys F3 (lower) and F4 (higher).

- Clicking the Sample frequency label in the combined Record length + Sample frequency + Resolution indicator on the instrument toolbar and selecting the required value from the popup menu.

- Right-clicking the horizontal scrollbar under the graph displaying signals from the instrument and selecting Sample frequency and then the appropriate value in the popup menu.

- Right-clicking the instrument in the Object Tree, selecting Sample frequency and then the appropriate sample frequency value in the popup menu.

Aliasing

When sampling an analog signal with a certain sampling frequency, signals appear in the output with frequencies equal to the sum and difference of the signal frequency and multiples of the sampling frequency. For example, when the sampling frequency is 1000 Hz and the signal frequency is 1250 Hz, the following signal frequencies will be present in the output data:

| Multiple of sampling frequency (Hz) | 1250 Hz signal | -1250 Hz signal |
|---|---|---|
| ... | | |
| -1000 | -1000 + 1250 = 250 | -1000 - 1250 = -2250 |
| 0 | 0 + 1250 = 1250 | 0 - 1250 = -1250 |
| 1000 | 1000 + 1250 = 2250 | 1000 - 1250 = -250 |
| 2000 | 2000 + 1250 = 3250 | 2000 - 1250 = 750 |
| ... | | |

As stated before, when sampling a signal, only frequencies lower than half the sampling frequency can be reconstructed. In this case the sampling frequency is 1000 Hz, so we can only observe signals with a frequency ranging from 0 to 500 Hz. This means that from the resulting frequencies in the table, we can only see the 250 Hz signal in the sampled data. This signal is called an alias of the original signal.
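The frequency at which an alias appears can be computed by folding the signal frequency back into the observable band from 0 to half the sampling frequency. The sketch below illustrates this; the function name `alias_frequency` is an assumption made for this example.

```python
def alias_frequency(signal_hz, sample_hz):
    """Fold signal_hz into the observable band 0 .. sample_hz / 2."""
    # Remove whole multiples of the sampling frequency.
    remainder = signal_hz % sample_hz
    # Frequencies in the upper half of the band mirror back into the lower half.
    return min(remainder, sample_hz - remainder)


# The example from the text: a 1250 Hz signal sampled at 1000 Hz.
print(alias_frequency(1250, 1000))  # 250
```

A signal that already lies below half the sampling frequency is returned unchanged, for example `alias_frequency(400, 1000)` gives 400.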

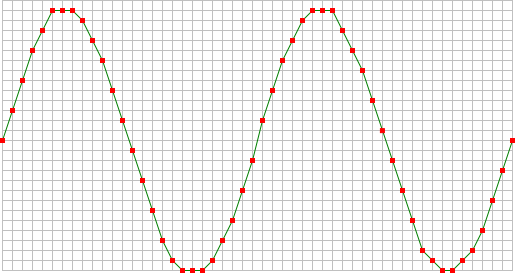

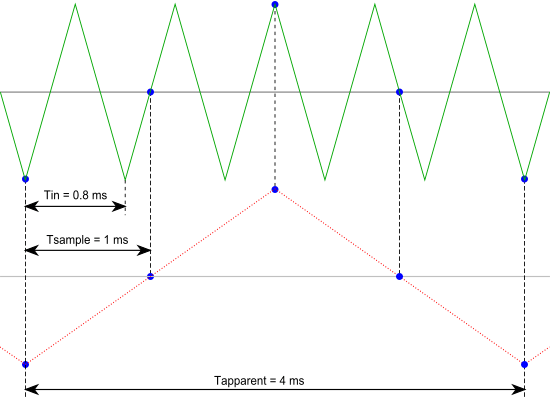

If the sampling frequency is lower than 2 times the frequency of the input signal, aliasing will occur. The following illustration shows what happens.

In this picture, the green input signal (top) is a triangular signal with a frequency of 1.25 kHz. The signal is sampled with a frequency of 1 kHz. The corresponding sampling interval is 1/( 1000 Hz ) = 1 ms. The positions at which the signal is sampled are depicted with the blue dots.

The red dotted signal (bottom) is the result of the reconstruction. The period time of this triangular signal appears to be 4 ms, which corresponds to an apparent frequency (alias) of 250 Hz (1.25 kHz - 1 kHz).
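This effect can be checked numerically: at the 1 ms sample positions, a 1.25 kHz triangular signal and a 250 Hz triangular signal have the same values, so the samples alone cannot distinguish them. The sketch below assumes a triangle wave of amplitude 1 that starts at its peak; the actual phase and amplitude in the illustration are not known.

```python
import math


def triangle(t, frequency_hz):
    """Triangle wave with amplitude 1 that starts at its peak (assumed shape)."""
    phase = (t * frequency_hz) % 1.0
    return 4.0 * abs(phase - 0.5) - 1.0


sample_interval = 1 / 1000  # 1 kHz sampling frequency -> 1 ms between samples
times = [n * sample_interval for n in range(12)]

samples_input = [triangle(t, 1250) for t in times]  # the real 1.25 kHz signal
samples_alias = [triangle(t, 250) for t in times]   # the apparent 250 Hz signal

# Compare with a small tolerance to absorb floating-point rounding.
identical = all(
    math.isclose(a, b, abs_tol=1e-9) for a, b in zip(samples_input, samples_alias)
)
print("Samples identical:", identical)
```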

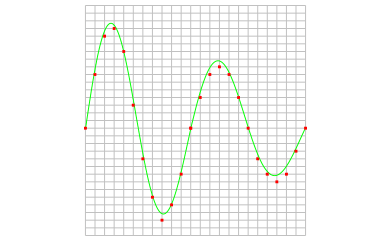

In practice, to avoid aliasing, always start measuring at the highest sampling frequency and lower the sampling frequency if required. Use function keys F3 (lower) and F4 (higher) to change the sampling frequency in a quick and easy way. The next illustration gives an example of what aliasing can look like.

In this picture, a sine wave signal with a frequency of 257 kHz is sampled at a frequency of 50 kHz. For correct reconstruction, the sampling frequency must be higher than 514 kHz. For proper analysis, the sampling frequency should have been approximately 5 MHz.

Record Length

With a given sampling frequency, the number of samples that are taken determines the duration of the measurement. This number of samples is called the record length. Increasing the record length will increase the total measuring time. The result is that more of the measured signal is visible. In the images below, three measurements are displayed, one with a record length of 12 samples, one with 24 samples and one with 36 samples.

The total duration of a measurement can easily be calculated from the sampling frequency and the record length:

$$\text{measurement duration} = \frac{\text{record length}}{\text{sampling frequency}}$$

Changing the record length of an instrument in the Multi Channel oscilloscope software can be done in various different ways:

- Clicking the Record length indicator on the instrument toolbar and selecting the required value from the popup menu.

- Opening the instrument settings dialog using the Instrument settings dialog button and selecting the required record length in the dialog.

- Clicking the decrease/increase record length buttons on the instrument toolbar.

- Using hotkeys F11 (shorter) and F12 (longer).

- Clicking the Record length label in the combined Record length + Sample frequency + Resolution indicator on the instrument toolbar and selecting the required value from the popup menu.

- Right-clicking the horizontal scrollbar under the graph displaying signals from the instrument and selecting Record length and then the appropriate value in the popup menu.

- Right-clicking the instrument in the Object Tree, selecting Record length and then the appropriate value in the popup menu.

Time base

The combination of sampling frequency and record length forms the time base of an oscilloscope. To set up the time base properly, the total measurement duration and the required time resolution have to be taken into account.

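There are several ways to find the required time base setting. With the required measurement duration and sampling frequency, the required number of samples can be determined:

$$\text{record length} = \text{measurement duration} \times \text{sampling frequency}$$

With a known record length in samples and the required measurement duration, the necessary sampling frequency can be calculated:

$$\text{sampling frequency} = \frac{\text{record length}}{\text{measurement duration}}$$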
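The relations between measurement duration, record length and sampling frequency can be sketched in a few lines. The function names below are assumptions made for this example and are not part of any oscilloscope software.

```python
def measurement_duration(record_length, sample_frequency_hz):
    """Total measurement time in seconds."""
    return record_length / sample_frequency_hz


def required_record_length(duration_s, sample_frequency_hz):
    """Number of samples needed to cover duration_s (rounded to a whole sample)."""
    return round(duration_s * sample_frequency_hz)


def required_sample_frequency(record_length, duration_s):
    """Sampling frequency in Hz that spreads record_length samples over duration_s."""
    return record_length / duration_s


# Example: 2000 samples at 1 kHz cover 2 seconds, and the relations invert cleanly.
print(measurement_duration(2000, 1000))      # 2.0
print(required_record_length(2.0, 1000))     # 2000
print(required_sample_frequency(2000, 2.0))  # 1000.0
```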

In the Multi Channel oscilloscope software, both record length and sampling frequency can be set independently, to give the best flexibility. They can be selected from menus and toolbar buttons, and keyboard shortcuts are available as well. For more information, refer to the Sample frequency and Record Length sections above.

The Multi Channel oscilloscope software also provides controls to change record length and sample frequency simultaneously to specific combinations to obtain certain time/div values:

- Clicking the decrease/increase Time/div buttons on the instrument toolbar.

- Clicking the Time/div indicator on the instrument toolbar and selecting the required time/div value from the popup menu.

- Opening the instrument settings dialog using the Instrument settings dialog button and selecting the required Time base setting in the dialog.

- Using hotkeys Ctrl + F11 (lower) and Ctrl + F12 (higher).

Because a certain time/div setting can be created from different combinations of record length and sampling frequency, the Multi Channel oscilloscope software must decide which combination is used. The software will try to use the highest sample frequency possible and will adjust the record length accordingly to obtain the required time/div setting.

To prevent the record length from becoming so long that collecting the measured values slows down the response of the oscilloscope, the record length used for time/div settings is limited to a maximum that can be set in the program settings. When the time/div setting is adjusted by one of the corresponding controls, the record length will not exceed this value.

This limit does not apply to manually adjusting the record length.
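The selection strategy described above (prefer the highest sample frequency, subject to a record length limit) might look roughly like the sketch below. This is an illustration only and not the software's actual algorithm: the function `choose_time_base`, the list of available sample frequencies and the assumption of 10 horizontal divisions are all hypothetical.

```python
def choose_time_base(time_per_div_s, available_frequencies_hz, max_record_length,
                     divisions=10):
    """Pick (sample frequency, record length) for a requested time/div value.

    Tries the highest sample frequency first and falls back to lower ones
    until the record length fits within max_record_length.
    The number of divisions (10) is an assumption for this sketch.
    """
    duration_s = time_per_div_s * divisions
    for frequency_hz in sorted(available_frequencies_hz, reverse=True):
        record_length = round(duration_s * frequency_hz)
        if 1 <= record_length <= max_record_length:
            return frequency_hz, record_length
    raise ValueError("no sample frequency fits the record length limit")


# Example with hypothetical values: 0.5 s/div, three sample frequencies,
# and a record length limit of 2000 samples.
print(choose_time_base(0.5, [10, 100, 1000], max_record_length=2000))  # (100, 500)
```

In this example 1000 Hz would require 5000 samples, which exceeds the limit, so the next lower frequency is used.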

Resolution

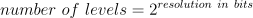

When digitizing the samples, the voltage at each sample time is converted to a number. This is done by comparing the voltage with a number of levels. The resulting number is the number of the highest level that is still lower than the voltage. The number of levels is determined by the resolution. The higher the resolution, the more levels are available and the more accurately the input signal can be reconstructed. In the image below, the same signal is digitized using three different numbers of levels: 16, 32 and 64.

The number of available levels is determined by the resolution:

$$\text{number of levels} = 2^{\text{resolution in bits}}$$

The used resolutions in the previous image are respectively: 4 bits, 5 bits and 6 bits.

The smallest detectable voltage difference depends on the resolution and the input range. This voltage can be calculated as:

$$\text{voltage step} = \frac{\text{full input range}}{\text{number of levels}}$$

In the 200 mV range, the full scale ranges from -200 mV to +200 mV, so the full range is 400 mV. When a 12 bit resolution is used, there are $2^{12} = 4096$ levels. This results in a smallest detectable voltage step of 0.400 V / 4096 = 97.7 µV. In 16 bit resolution this step is 0.400 V / 65536 = 6.1 µV.
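The numbers in this example can be reproduced with a short calculation. The sketch below also includes a simple quantizer that returns the index of the highest level not exceeding the input voltage; clamping out-of-range voltages to the first and last level is an assumption of this sketch, and all function names are illustrative.

```python
import math


def number_of_levels(resolution_bits):
    return 2 ** resolution_bits


def voltage_step(full_range_v, resolution_bits):
    """Smallest detectable voltage difference for the given range and resolution."""
    return full_range_v / number_of_levels(resolution_bits)


def quantize(voltage_v, range_min_v, range_max_v, resolution_bits):
    """Index of the highest level that does not exceed voltage_v.

    Voltages outside the input range are clamped (an assumption of this sketch).
    """
    levels = number_of_levels(resolution_bits)
    step = (range_max_v - range_min_v) / levels
    index = math.floor((voltage_v - range_min_v) / step)
    return max(0, min(levels - 1, index))


# The 200 mV range spans -0.2 V to +0.2 V, a full range of 0.4 V.
print(f"12 bit: {voltage_step(0.4, 12) * 1e6:.1f} uV")  # 97.7 uV
print(f"16 bit: {voltage_step(0.4, 16) * 1e6:.1f} uV")  # 6.1 uV
print(quantize(0.0, -0.2, 0.2, 12))                      # 2048
```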

Enhanced resolution

An instrument will have one or more native resolutions, where the resolution is natively present in the Analog to Digital Converter (ADC). Higher resolutions will usually have lower maximum sampling rates.

Additionally, instruments can have one or more enhanced resolutions. These are resolutions that are not present on the ADC, but are created in the instrument using oversampling techniques. As a result of the oversampling technique, the maximum sampling rate at the enhanced resolution is lower.

Resolution mode

As the selected resolution may affect the maximum sample frequency, a resolution mode is available that determines the resolution for the instrument, based on the selected sample frequency. The resolution mode supports the following settings:

- Fixed: the resolution of the oscilloscope is fixed and will not be changed by the software, based on the selected sample frequency.

- Auto non-enhanced: the software sets the resolution of the oscilloscope automatically to the highest possible resolution for the current sample frequency, using only the ADC's native resolutions and not the enhanced resolution(s).

- Auto all: the software sets the resolution of the oscilloscope automatically to the highest possible resolution for the current sample frequency, including enhanced resolution(s).
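The three settings can be pictured as a lookup over the resolutions an instrument supports. The sketch below is purely illustrative: the `ResolutionOption` type, the mode names as strings and the table of resolutions with their maximum sample frequencies are hypothetical and do not describe any real instrument or the software's internal logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ResolutionOption:
    bits: int
    max_sample_frequency_hz: float
    enhanced: bool  # True when created by oversampling instead of the ADC itself


def select_resolution(mode, sample_frequency_hz, options, fixed_bits=None):
    """Return the resolution in bits for the given resolution mode."""
    if mode == "fixed":
        return fixed_bits  # never changed based on the sample frequency
    candidates = [
        option for option in options
        if option.max_sample_frequency_hz >= sample_frequency_hz
        and (mode == "auto_all" or not option.enhanced)
    ]
    if not candidates:
        raise ValueError("no resolution supports this sample frequency")
    return max(option.bits for option in candidates)


# Hypothetical instrument: three native resolutions and one enhanced resolution.
OPTIONS = [
    ResolutionOption(8, 100e6, enhanced=False),
    ResolutionOption(12, 50e6, enhanced=False),
    ResolutionOption(14, 3e6, enhanced=False),
    ResolutionOption(16, 200e3, enhanced=True),
]

print(select_resolution("auto_non_enhanced", 100e3, OPTIONS))  # 14
print(select_resolution("auto_all", 100e3, OPTIONS))           # 16
```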

Controlling the resolution

Changing the resolution of an instrument in the Multi Channel oscilloscope software can be done in various different ways:

- Opening the instrument settings dialog using the Instrument settings dialog button and selecting the required resolution in the dialog.

- Right-clicking the instrument in the Object Tree, selecting Resolution and then the appropriate resolution value in the popup menu.

- Clicking the decrease/increase resolution buttons on the instrument toolbar.

- Clicking the resolution indicator on the instrument toolbar and selecting the required resolution from the popup menu.